Google Professional Machine Learning Engineer Google Professional Machine Learning Engineer Online Training

Google Professional Machine Learning Engineer Online Training

The questions for Professional Machine Learning Engineer were last updated at Mar 30,2026.

- Exam Code: Professional Machine Learning Engineer

- Exam Name: Google Professional Machine Learning Engineer

- Certification Provider: Google

- Latest update: Mar 30,2026

As the lead ML Engineer for your company, you are responsible for building ML models to digitize scanned customer forms. You have developed a TensorFlow model that converts the scanned images into text and stores them in Cloud Storage. You need to use your ML model on the aggregated data collected at the end of each day with minimal manual intervention.

What should you do?

- A . Use the batch prediction functionality of Al Platform

- B . Create a serving pipeline in Compute Engine for prediction

- C . Use Cloud Functions for prediction each time a new data point is ingested

- D . Deploy the model on Al Platform and create a version of it for online inference.

You work for a global footwear retailer and need to predict when an item will be out of stock based on historical inventory data. Customer behavior is highly dynamic since footwear demand is influenced by many different factors. You want to serve models that are trained on all available data, but track your performance on specific subsets of data before pushing to production.

What is the most streamlined and reliable way to perform this validation?

- A . Use the TFX ModelValidator tools to specify performance metrics for production readiness

- B . Use k-fold cross-validation as a validation strategy to ensure that your model is ready for production.

- C . Use the last relevant week of data as a validation set to ensure that your model is performing accurately on current data

- D . Use the entire dataset and treat the area under the receiver operating characteristics curve (AUC ROC) as the main metric.

You work on a growing team of more than 50 data scientists who all use Al Platform. You are designing a strategy to organize your jobs, models, and versions in a clean and scalable way.

Which strategy should you choose?

- A . Set up restrictive I AM permissions on the Al Platform notebooks so that only a single user or group can access a given instance.

- B . Separate each data scientist’s work into a different project to ensure that the jobs, models, and versions created by each data scientist are accessible only to that user.

- C . Use labels to organize resources into descriptive categories. Apply a label to each created resource so that users can filter the results by label when viewing or monitoring the resources

- D . Set up a BigQuery sink for Cloud Logging logs that is appropriately filtered to capture information about Al Platform resource usage In BigQuery create a SQL view that maps users to the resources they are using.

During batch training of a neural network, you notice that there is an oscillation in the loss.

How should you adjust your model

Oscillation in the loss during batch to ensure that it converges?

- A . Increase the size of the training batch

- B . Decrease the size of the training batch

- C . Increase the learning rate hyperparameter

- D . Decrease the learning rate hyperparameter

You are building a linear model with over 100 input features, all with values between -1 and 1. You suspect that many features are non-informative. You want to remove the non-informative features from your model while keeping the informative ones in their original form.

Which technique should you use?

- A . Use Principal Component Analysis to eliminate the least informative features.

- B . Use L1 regularization to reduce the coefficients of uninformative features to 0.

- C . After building your model, use Shapley values to determine which features are the most informative.

- D . Use an iterative dropout technique to identify which features do not degrade the model when removed.

Your team has been tasked with creating an ML solution in Google Cloud to classify support requests for one of your platforms. You analyzed the requirements and decided to use TensorFlow to build the classifier so that you have full control of the model’s code, serving, and deployment. You will use Kubeflow pipelines for the ML platform. To save time, you want to build on existing resources and use managed services instead of building a completely new model.

How should you build the classifier?

- A . Use the Natural Language API to classify support requests

- B . Use AutoML Natural Language to build the support requests classifier

- C . Use an established text classification model on Al Platform to perform transfer learning

- D . Use an established text classification model on Al Platform as-is to classify support requests

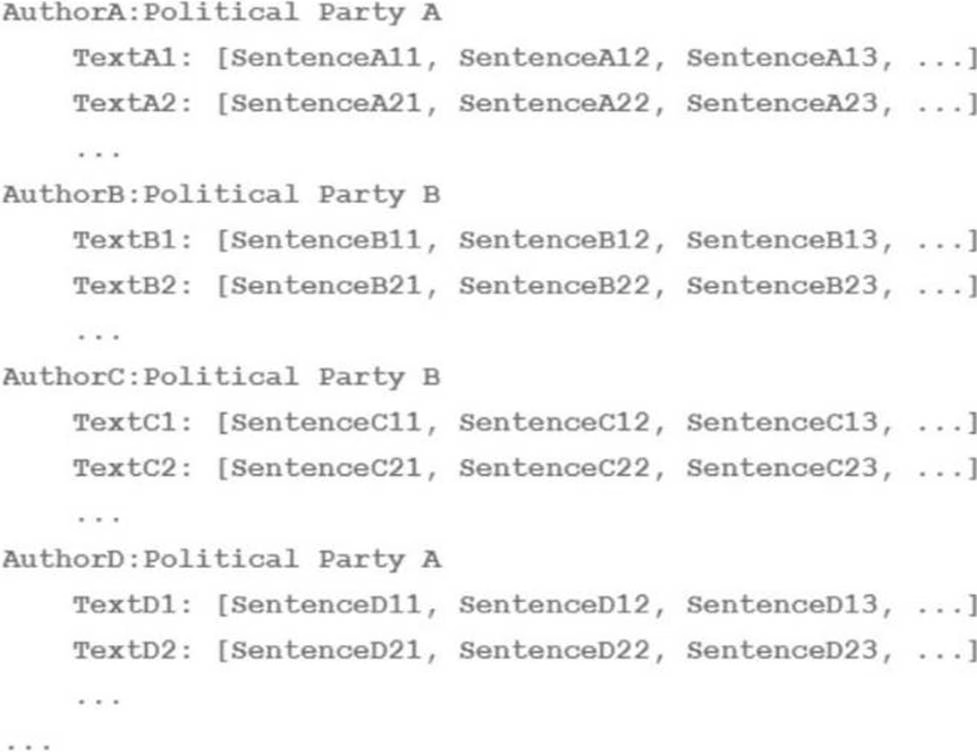

Your team is working on an NLP research project to predict political affiliation of authors based on articles they have written.

You have a large training dataset that is structured like this:

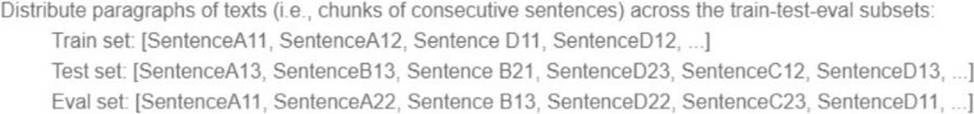

You followed the standard 80%-10%-10% data distribution across the training, testing, and evaluation subsets.

How should you distribute the training examples across the train-test-eval subsets while maintaining the 80-10-10 proportion?

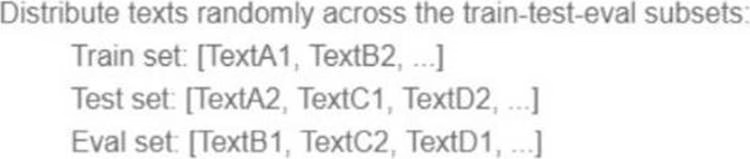

A)

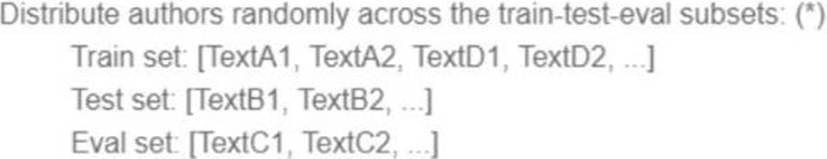

B)

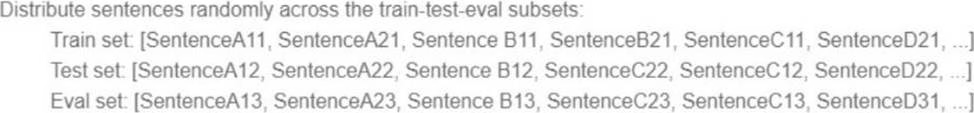

C)

D)

- A . Option A

- B . Option B

- C . Option C

- D . Option D

Your data science team needs to rapidly experiment with various features, model architectures, and hyperparameters. They need to track the accuracy metrics for various experiments and use an API to query the metrics over time.

What should they use to track and report their experiments while minimizing manual effort?

- A . Use Kubeflow Pipelines to execute the experiments Export the metrics file, and query the results using the Kubeflow Pipelines API.

- B . Use Al Platform Training to execute the experiments Write the accuracy metrics to BigQuery, and query the results using the BigQueryAPI.

- C . Use Al Platform Training to execute the experiments Write the accuracy metrics to Cloud Monitoring, and query the results using the Monitoring API.

- D . Use Al Platform Notebooks to execute the experiments. Collect the results in a shared Google

Sheets file, and query the results using the Google Sheets API

You are an ML engineer at a bank that has a mobile application. Management has asked you to build an ML-based biometric authentication for the app that verifies a customer’s identity based on their fingerprint. Fingerprints are considered highly sensitive personal information and cannot be downloaded and stored into the bank databases.

Which learning strategy should you recommend to train and deploy this ML model?

- A . Differential privacy

- B . Federated learning

- C . MD5 to encrypt data

- D . Data Loss Prevention API

You are building a linear regression model on BigQuery ML to predict a customer’s likelihood of purchasing your company’s products. Your model uses a city name variable as a key predictive component. In order to train and serve the model, your data must be organized in columns. You want to prepare your data using the least amount of coding while maintaining the predictable variables.

What should you do?

- A . Create a new view with BigQuery that does not include a column with city information

- B . Use Dataprep to transform the state column using a one-hot encoding method, and make each city a column with binary values.

- C . Use Cloud Data Fusion to assign each city to a region labeled as 1, 2, 3, 4, or 5r and then use that number to represent the city in the model.

- D . Use TensorFlow to create a categorical variable with a vocabulary list Create the vocabulary file, and upload it as part of your model to BigQuery ML.

Latest Professional Machine Learning Engineer Dumps Valid Version with 60 Q&As

Latest And Valid Q&A | Instant Download | Once Fail, Full Refund