Google Professional Data Engineer Google Certified Professional – Data Engineer Online Training

Google Professional Data Engineer Online Training

The questions for Professional Data Engineer were last updated at Feb 18,2026.

- Exam Code: Professional Data Engineer

- Exam Name: Google Certified Professional – Data Engineer

- Certification Provider: Google

- Latest update: Feb 18,2026

MJTelco needs you to create a schema in Google Bigtable that will allow for the historical analysis of the last 2 years of records. Each record that comes in is sent every 15 minutes, and contains a unique identifier of the device and a data record. The most common query is for all the data for a given device for a given day.

Which schema should you use?

- A . Rowkey: date#device_idColumn data: data_point

- B . Rowkey: dateColumn data: device_id, data_point

- C . Rowkey: device_idColumn data: date, data_point

- D . Rowkey: data_pointColumn data: device_id, date

- E . Rowkey: date#data_pointColumn data: device_id

MJTelco is building a custom interface to share data.

They have these requirements:

They need to do aggregations over their petabyte-scale datasets.

They need to scan specific time range rows with a very fast response time (milliseconds).

Which combination of Google Cloud Platform products should you recommend?

- A . Cloud Datastore and Cloud Bigtable

- B . Cloud Bigtable and Cloud SQL

- C . BigQuery and Cloud Bigtable

- D . BigQuery and Cloud Storage

You need to compose visualization for operations teams with the following requirements:

Telemetry must include data from all 50,000 installations for the most recent 6 weeks (sampling once every minute)

The report must not be more than 3 hours delayed from live data.

The actionable report should only show suboptimal links.

Most suboptimal links should be sorted to the top.

Suboptimal links can be grouped and filtered by regional geography.

User response time to load the report must be <5 seconds.

You create a data source to store the last 6 weeks of data, and create visualizations that allow viewers to see multiple date ranges, distinct geographic regions, and unique installation types. You always show the latest data without any changes to your visualizations. You want to avoid creating and updating new visualizations each month.

What should you do?

- A . Look through the current data and compose a series of charts and tables, one for each possible

combination of criteria. - B . Look through the current data and compose a small set of generalized charts and tables bound to

criteria filters that allow value selection. - C . Export the data to a spreadsheet, compose a series of charts and tables, one for each possible

combination of criteria, and spread them across multiple tabs. - D . Load the data into relational database tables, write a Google App Engine application that queries all rows, summarizes the data across each criteria, and then renders results using the Google Charts and visualization API.

Given the record streams MJTelco is interested in ingesting per day, they are concerned about the cost of Google BigQuery increasing. MJTelco asks you to provide a design solution. They require a single large data table called tracking_table. Additionally, they want to minimize the cost of daily queries while performing fine-grained analysis of each day’s events. They also want to use streaming ingestion.

What should you do?

- A . Create a table called tracking_table and include a DATE column.

- B . Create a partitioned table called tracking_table and include a TIMESTAMP column.

- C . Create sharded tables for each day following the pattern tracking_table_YYYYMMDD.

- D . Create a table called tracking_table with a TIMESTAMP column to represent the day.

Topic 4, Main Questions Set B

Your company has recently grown rapidly and now ingesting data at a significantly higher rate than it was previously. You manage the daily batch MapReduce analytics jobs in Apache Hadoop. However, the recent increase in data has meant the batch jobs are falling behind. You were asked to recommend ways the development team could increase the responsiveness of the analytics without increasing costs.

What should you recommend they do?

- A . Rewrite the job in Pig.

- B . Rewrite the job in Apache Spark.

- C . Increase the size of the Hadoop cluster.

- D . Decrease the size of the Hadoop cluster but also rewrite the job in Hive.

You work for a large fast food restaurant chain with over 400,000 employees. You store employee information in Google BigQuery in a Users table consisting of a FirstName field and a LastName field. A member of IT is building an application and asks you to modify the schema and data in BigQuery so the application can query a FullName field consisting of the value of the FirstName field concatenated with a space, followed by the value of the LastName field for each employee.

How can you make that data available while minimizing cost?

- A . Create a view in BigQuery that concatenates the FirstName and LastName field values to produce the FullName.

- B . Add a new column called FullName to the Users table. Run an UPDATE statement that updates the FullName column for each user with the concatenation of the FirstName and LastName values.

- C . Create a Google Cloud Dataflow job that queries BigQuery for the entire Users table, concatenates the FirstName value and LastName value for each user, and loads the proper values for FirstName,

LastName, and FullName into a new table in BigQuery. - D . Use BigQuery to export the data for the table to a CSV file. Create a Google Cloud Dataproc job to process the CSV file and output a new CSV file containing the proper values for FirstName, LastName and FullName. Run a BigQuery load job to load the new CSV file into BigQuery.

You are deploying a new storage system for your mobile application, which is a media streaming service. You decide the best fit is Google Cloud Datastore. You have entities with multiple properties, some of which can take on multiple values. For example, in the entity ‘Movie’ the property ‘actors’ and the property ‘tags’ have multiple values but the property ‘date released’ does not. A typical query would ask for all movies with actor=<actorname> ordered by date_released or all movies with tag=Comedy ordered by date_released.

How should you avoid a combinatorial explosion in the number of indexes?

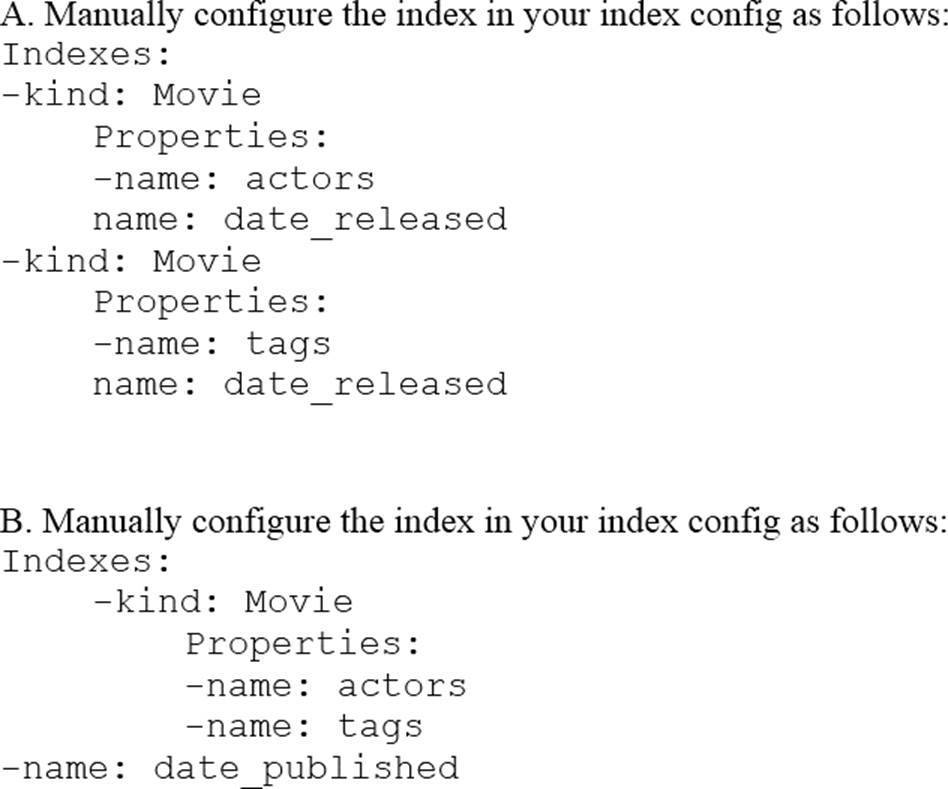

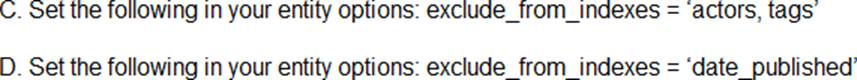

- A . Option A

- B . Option B.

- C . Option C

- D . Option D

You work for a manufacturing plant that batches application log files together into a single log file once a day at 2:00 AM. You have written a Google Cloud Dataflow job to process that log file. You need to make sure the log file in processed once per day as inexpensively as possible.

What should you do?

- A . Change the processing job to use Google Cloud Dataproc instead.

- B . Manually start the Cloud Dataflow job each morning when you get into the office.

- C . Create a cron job with Google App Engine Cron Service to run the Cloud Dataflow job.

- D . Configure the Cloud Dataflow job as a streaming job so that it processes the log data immediately.

You work for an economic consulting firm that helps companies identify economic trends as they happen. As part of your analysis, you use Google BigQuery to correlate customer data with the average prices of the 100 most common goods sold, including bread, gasoline, milk, and others. The average prices of these goods are updated every 30 minutes. You want to make sure this data stays up to date so you can combine it with other data in BigQuery as cheaply as possible.

What should you do?

- A . Load the data every 30 minutes into a new partitioned table in BigQuery.

- B . Store and update the data in a regional Google Cloud Storage bucket and create a federated data source in BigQuery

- C . Store the data in Google Cloud Datastore. Use Google Cloud Dataflow to query BigQuery and combine the data programmatically with the data stored in Cloud Datastore

- D . Store the data in a file in a regional Google Cloud Storage bucket. Use Cloud Dataflow to query BigQuery and combine the data programmatically with the data stored in Google Cloud Storage.

You are designing the database schema for a machine learning-based food ordering service that will predict what users want to eat.

Here is some of the information you need to store:

The user profile:.

What the user likes and doesn’t like to eat

The user account information: Name, address, preferred meal times

The order information: When orders are made, from where, to whom

The database will be used to store all the transactional data of the product.

You want to optimize the data schema.

Which Google Cloud Platform product should you use?

- A . BigQuery

- B . Cloud SQL

- C . Cloud Bigtable

- D . Cloud Datastore

Latest Professional Data Engineer Dumps Valid Version with 160 Q&As

Latest And Valid Q&A | Instant Download | Once Fail, Full Refund